Mario Zechner wrote a post today called “Thoughts on Slowing the Fuck Down”. His argument: AI agents compound errors in codebases because humans are no longer the bottleneck. “A human cannot shit out 20,000 lines of code in a few hours.” That friction was a feature. Without it, tiny mistakes accumulate until the codebase is untrustworthy.

He’s right about code. But the same dynamics are happening to everything else we produce with AI, including the documents we use to decide what to code in the first place.

Mario identifies three problems with AI-generated code: errors compound silently, agents create unnecessary complexity through local decisions, and recall drops as the codebase grows. All three apply to strategy docs.

Error compounding. An unvalidated assumption on page 2 becomes load-bearing by page 15. Every subsequent section builds on it. By page 25, the assumption has been restated so many times it reads like established fact. Nobody remembers it started as a guess.

Learned complexity. Mario calls this “merchants of learned complexity”: agents make local decisions without seeing the whole system, so they introduce unnecessary abstractions. Strategy docs do the same thing. Every insight gets a framework. Every framework gets subsections. Every subsection gets a table. A 3-page doc becomes 25 pages overnight, not because the thinking got deeper, but because the writing got cheaper.

Low recall. “The bigger the codebase, the lower the recall.” Agents miss existing code in large codebases: they duplicate, contradict, introduce inconsistencies. The same thing happens with long strategy docs. An agent asked to review a 25-page document will miss assumptions, skip contradictions between sections, and fail to connect a claim on page 3 with the evidence that undermines it on page 19. This matters because “use an agent to audit it” is the obvious fix, and it’s unreliable for the same reason agents struggle with large codebases.

Orange triangles

A charismatic leader describes their vision in a meeting. Everyone nods enthusiastically. But if you asked each person to draw what they heard, you’d get different shapes in different colors. The leader said “orange triangle.” Someone heard “orange circle.” Someone else heard “yellow triangle.” Everyone agreed with something different.

Except nobody asks them to draw. What actually happens is everyone goes away and works for a week. Someone demos a rough sketch. It looks a little off, but realignment is expensive: you’d have to reopen the whole conversation, re-read the doc, figure out where the interpretations diverged. So you assume best intent and go along with it. Six weeks later, twelve weeks later, the leader sees the finished thing and says “what the fuck is this?” The misalignment was there from the first meeting, but nobody tested for it. The nod was the last checkpoint, and the nod meant nothing.

Strategy docs were supposed to fix this. Write it down. Make it canonical. Source of truth. AI-produced strategy docs make it worse. Not because the ideas are bad, but because the volume gives everyone more surface area to project their own interpretation onto. Twenty-five pages means twenty-five pages of potential misreading. The person who skimmed the executive summary and the person who read every word can both say “I read the doc” and still disagree about what it means.

I’ve been watching this play out firsthand, and I keep hearing the same story over coffee with friends. Someone produces a detailed strategy document with AI, shares it with the team, says “read this, it covers everything.” Risks, market dynamics, technical architecture, competitive positioning. Impressive. And nobody reads it end to end. They skim the headers, absorb the parts that confirm what they already believed, and nod along. The illusion of alignment is complete.

My tactic so far: I either tell them explicitly I’m not going to read it (so I don’t end up nodding along to something I don’t understand) or I ask them to book a meeting and walk me through it line by line. That second one bursts the bubble fast. When the author has to spend their afternoon defending a document they iterated on all weekend with a chatbot, the externality becomes visible. It’s the same principle I wrote about in “The Externality of AI Velocity”: the antidote isn’t slowing down the writer. It’s making the true cost of consumption impossible to ignore.

Strategy has no test suite

Strategy is aspirational. It describes what you want to be true. The best strategy docs honestly separate “what we know” from “what we’re betting on,” but AI doesn’t naturally make that distinction. It generates internally consistent prose that supports whatever thesis you started with. Twenty-five pages of supporting argument for an unvalidated assumption looks exactly like twenty-five pages of supporting argument for a proven one.

Code has an objective feedback loop. Tests pass or fail. Latency is 34ms or 339ms. You find out quickly. Strategy doesn’t have that. You might not know the thesis was wrong for a year. In that gap, volume works in the author’s favor, not because the argument is strong, but because evaluating it is exhausting. Pre-AI, a weak thesis got picked apart in a meeting because the doc was three pages. A smart critic could find the flaw in real time. Post-AI, the same critic faces twenty-five pages of internally consistent reasoning. They’d need an afternoon just to locate the unvalidated assumption on page 2 that everything else depends on. Most people don’t have that afternoon. So they nod.

And this creates a nasty attribution error. When someone pushes back, the author’s instinct is “they didn’t read carefully enough.” That might be technically true. But it’s beside the point. If your argument requires an afternoon of close reading to evaluate, the document failed to communicate; the reader did not fail to consume. The author spent hours thinking with the AI. The reader got the output without the process. The author thinks the reader was lazy. Usually the doc was just too long.

“Just point your chatbot at it”

If the docs are too long to read, use AI to read them for you. “Point your chatbot at the strategy docs if you have questions about what to build.” Or better yet, have an agent identify every assumption, flag the unvalidated ones, build a risk matrix.

I’ve tried this. It’s useful but not reliable, and it doesn’t create alignment. The agent will catch some things: circular reasoning, claims without evidence, contradictions between sections. But Mario’s low recall problem applies here too. The bigger the document, the more the agent misses. It might find the shaky assumption on page 2 but miss the one on page 14 that the entire commercialization timeline depends on. You get a partial audit that feels comprehensive. There’s also a subtler problem: I’ve tried pasting a strategy doc into a chatbot and saying “I think this is dogshit.” The model might push back initially, but if I keep insisting, it sides with me. Not because I’ve uncovered a fundamental truth, but because the AI’s job is to be a helpful assistant. The chatbot validates whoever is prompting it, which means both the author and the critic can walk away feeling vindicated by the same document. Even when the audit is good, it’s a code review tool, not an alignment tool. It helps one person see what’s wrong (or right) with the argument. It does nothing to create shared understanding across a team.

I’m that guy sometimes

I should be honest: I’ve done this. I wrote about it with blog posts when HN flagged my writing as AI slop. I wrote about it with code when a six-minute AI-generated plan cost my teammate an hour of forensic review. The same pattern, different medium. AI velocity creates an externality: the time you save producing the artifact reappears downstream as cognitive load on everyone who has to consume it. But strategy docs are higher stakes than blog posts or code reviews. Strategy is capital allocation: where to play, how to win, what to build, what to ignore. These docs decide where time and money go. They need the most scrutiny and the most conciseness, not the least.

I’m not vilifying written documentation. Memos are great. Gino Wickman’s Traction prescribes a Vision/Traction Organizer: your entire company strategy on two pages. Core values, core focus, 10-year target, 3-year picture, 1-year plan, quarterly rocks. Two pages. That constraint is the whole point. It forces you to decide what’s load-bearing. It respects the reader’s cognitive budget. It can actually be read, questioned, and pressure-tested in a meeting. Tufte wrote about the data-ink ratio decades ago. Six bullets per slide wasn’t an aesthetic choice; it was a constraint that forced prioritization. The person reading the output hasn’t changed since the PowerPoint era. They’ll still absorb maybe six things. The question is whether those six things are the right six things, or just whichever six they happened to skim.

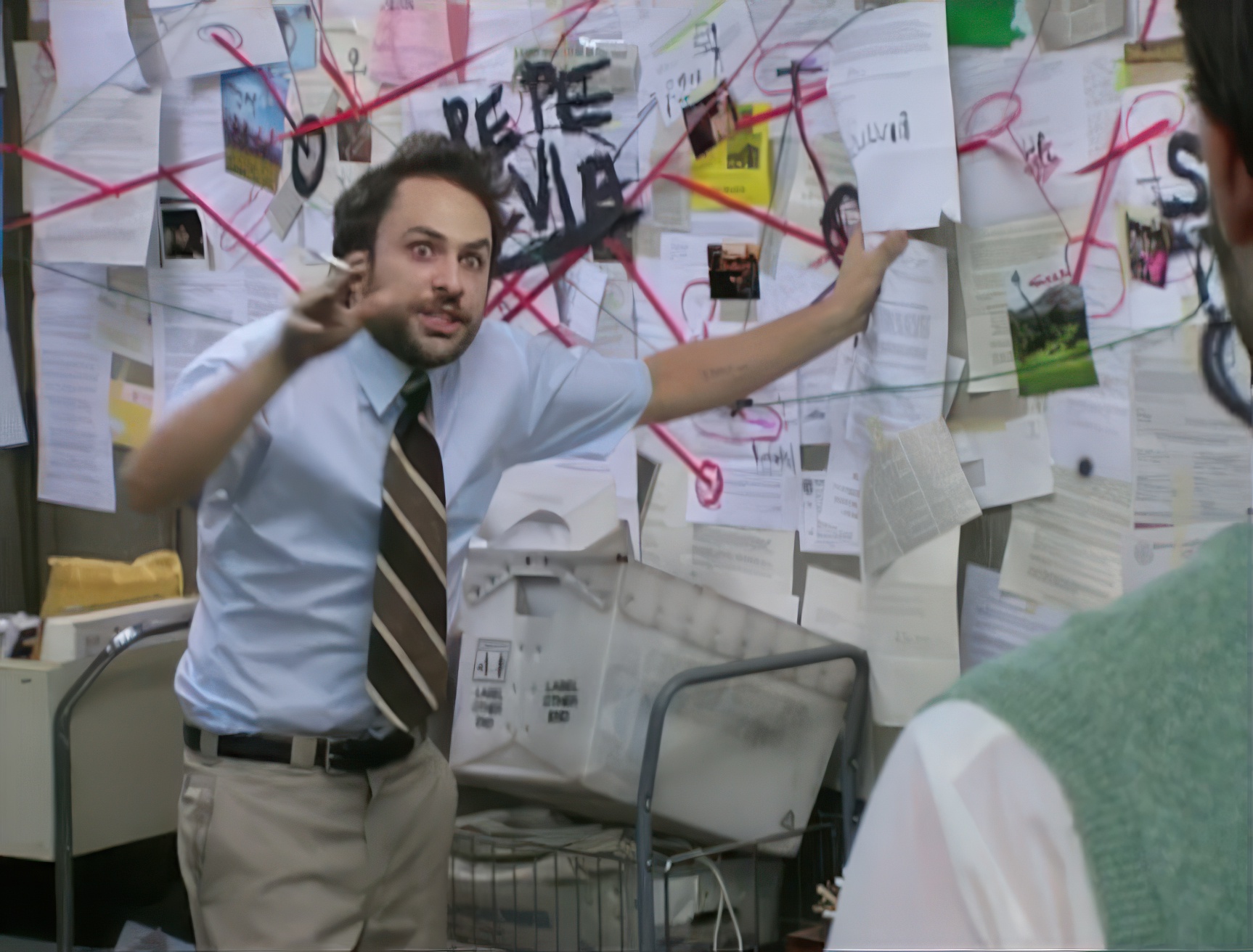

I use AI to write strategy docs all the time. I’m doing it right now, with this post. The way I think about it: there are two kinds of strategic documents, and they need different treatment. The first kind is a thinking tool, like a war room wall. You put things up, stare at them, move them around, argue about them, and throw them away when the operation moves to the next phase. AI is great for these. They’re point-in-time artifacts for clarifying your own thinking. The second kind is a capital allocation memo: where we’re playing, how we’re winning, what we’re building this quarter and why. These need to be short, precise, and scrutable. Every sentence should be load-bearing. If it takes longer than 15 minutes to read, it’s too long. The failure mode is treating the first kind like the second. When someone takes the war room wall (the 25-page AI-assisted brainstorm), binds it, and calls it “the strategy doc.” When “read the docs” replaces “let’s talk about what we’re building.” When the thinking tool becomes a substitute for the conversation it was supposed to facilitate.

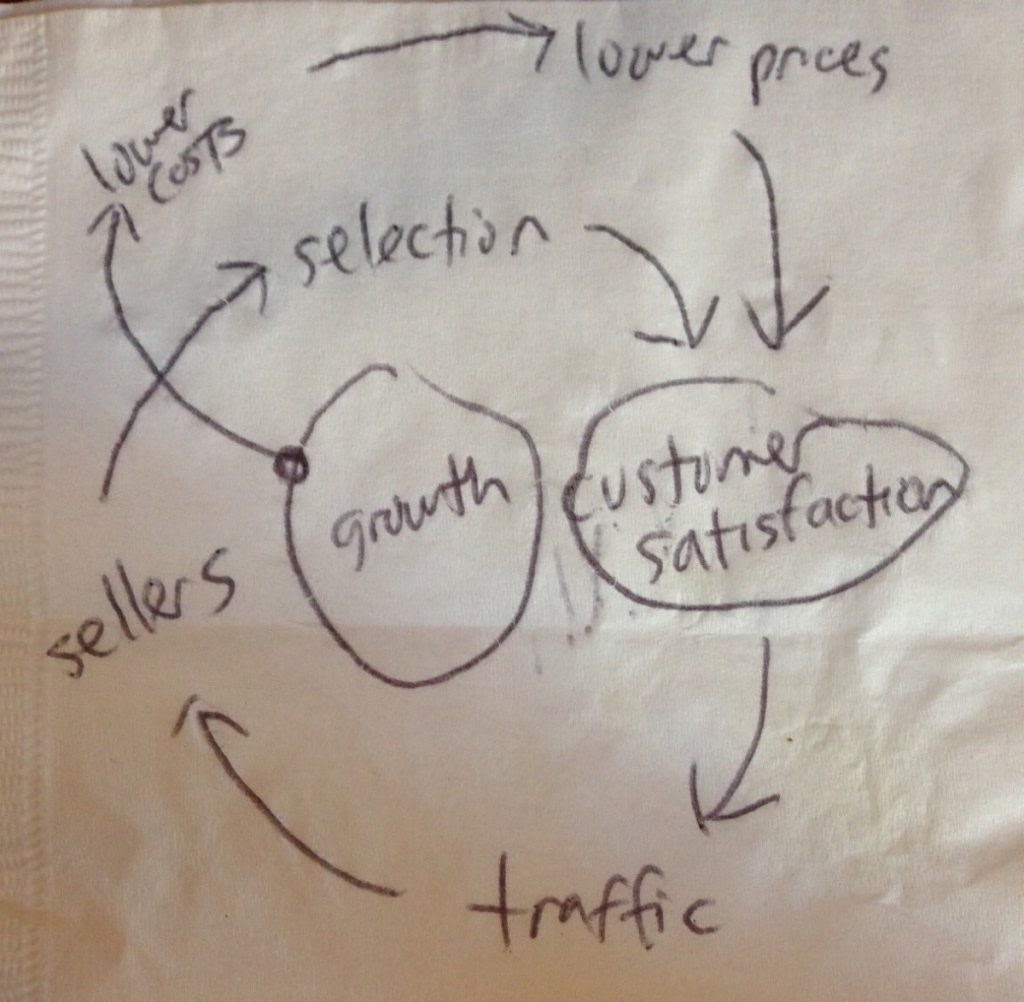

Two pages. One napkin. A trillion-dollar company.

The obvious counterargument: Amazon does the six-page memo and it works. Everyone sits in a room, reads silently for 30 minutes, then discusses line by line. That’s a long document with real alignment. But notice what makes it work: the meeting. The silent reading. The line-by-line interrogation. In Working Backwards, Colin Bryar describes asking Bezos how he consistently found insights in memos that nobody else caught. Bezos’s answer: “he assumes each sentence he reads is wrong until he can prove otherwise.” The document is a prop for that level of scrutiny, not a replacement for it. Are people doing that with 25-page AI-generated strategy docs? I seriously doubt it. If your team has that discipline, maybe the length isn’t the problem. But most teams don’t. They send the doc in Slack, ask for async feedback, and move on. The alternative is that the strategic leaders in the company do the deep thinking themselves, then expose only the load-bearing conclusions to the broader team. That’s closer to how most fast-moving startups actually operate: alignment on the what, autonomy on the how.

The weakness AI introduces is letting you mistake volume for substance. A 25-page strategy doc feels like a big strategy for a big business. But the length doesn’t come from deeper thinking; it comes from cheaper writing. We already know how to write concise strategy docs. We’re just not doing it. I think it’s excitement. AI changes so many rules that we assume it changes all of them. “We used to be limited to three pages because writing was expensive. Now we can afford to be comprehensive.” It feels like a capability upgrade. Mario’s argument is that it’s not, and I agree.

The more honest version: it’s addictive. The next big strategic insight is one prompt away. You feed your thesis to the chatbot, it generates a new framework, the framework has implications, the implications suggest a new section, and now your strategy doc has a competitive intelligence appendix that didn’t exist an hour ago. Each prompt feels productive. Each new section feels like progress. It’s a slot machine that pays out in prose. If nobody reads it, nobody aligns on it, and nobody changes what they’re building because of it, then it was a private exercise that produced a public artifact. The mistake is confusing the artifact with the outcome.

We have linters for code and review norms for PRs. We have decades of practice managing the quality of what engineers produce. We have almost no equivalent for what AI helps everyone else produce. Strategy docs, planning artifacts, competitive analyses, internal memos: all of it is growing faster than our ability to consume it. The old constraints exist. The discipline to reimpose them is the hard part.

Process note: This post was developed from voice notes and conversation with Claude Code, responding to Mario Zechner’s “Thoughts on Slowing the Fuck Down”. AI was used for research, structural editing, and drafting. All claims and opinions are mine; mistakes are mine.